November 2023

Features

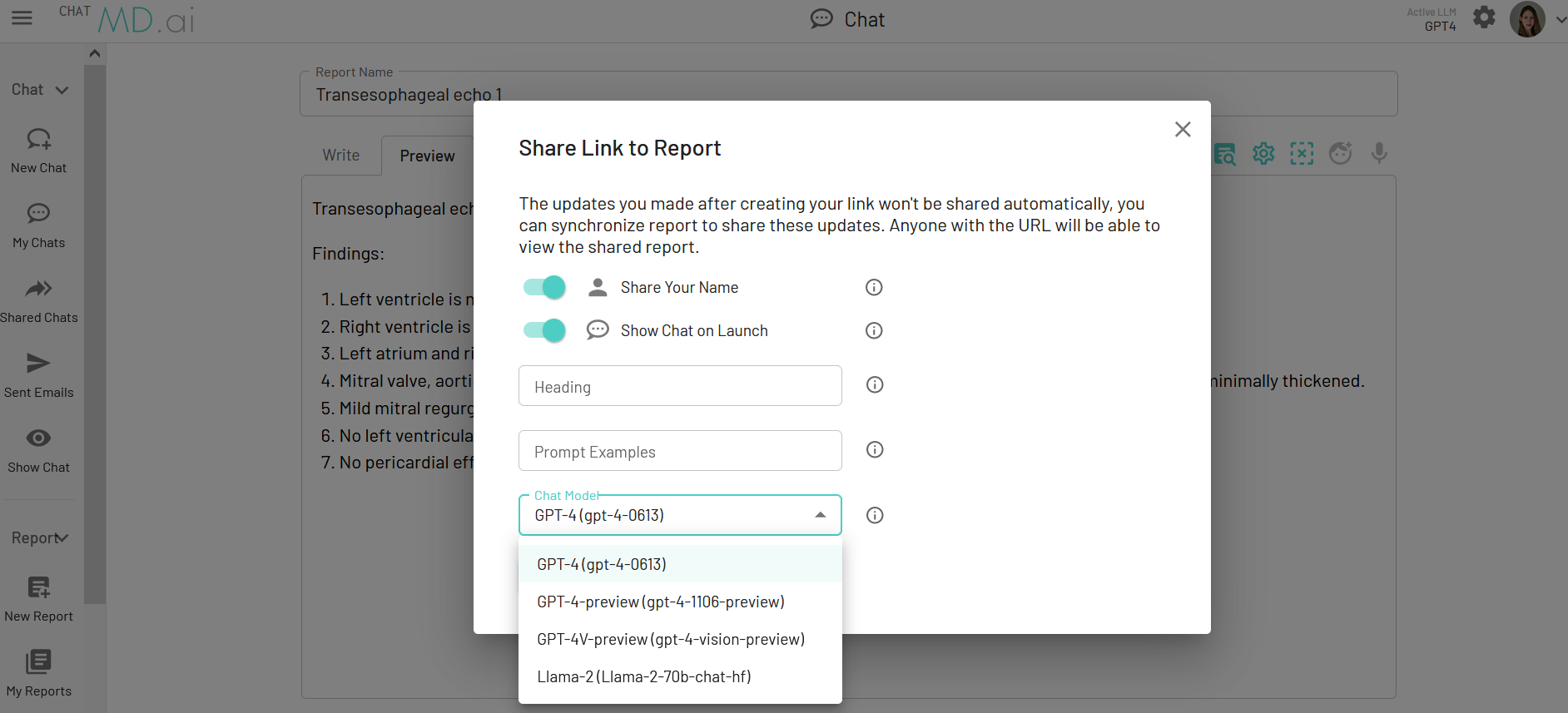

Large Multimodal Model Integration

MD.ai Chat combines large language models, including GPT-4, GPT-4V, and Llama 2, with reporting tools to leapfrog into the future of medical AI workflows. For example, use GPT-4 with Vision to interpret images in reports, or write more detailed prompts using GPT-4 Turbo's 128k-token context window (the equivalent of about 300 pages of text).

Report De-ID and Entity Extraction

Use clinical LLMs in a seamless workflow to deidentify report text. Beyond report deidentification, MD.ai provides a comprehensive suite of De-ID tools, including DICOM pixel redaction and DICOM tag deidentification.

Optimized UI for Mobile Devices

The UI is now optimized for mobile devices, making reporting and chat convenient to use across all device types.

New Reporting Features

New reporting features include optional customizable report headers, iframe support, and automated impression generation.

Additional Video Features

New Annotator video features include a frame_number sorting option; separate instance sort options for regular DICOM, multi-frame DICOM, and video; and default frame_number sorting when the new active series is multi-frame or video.

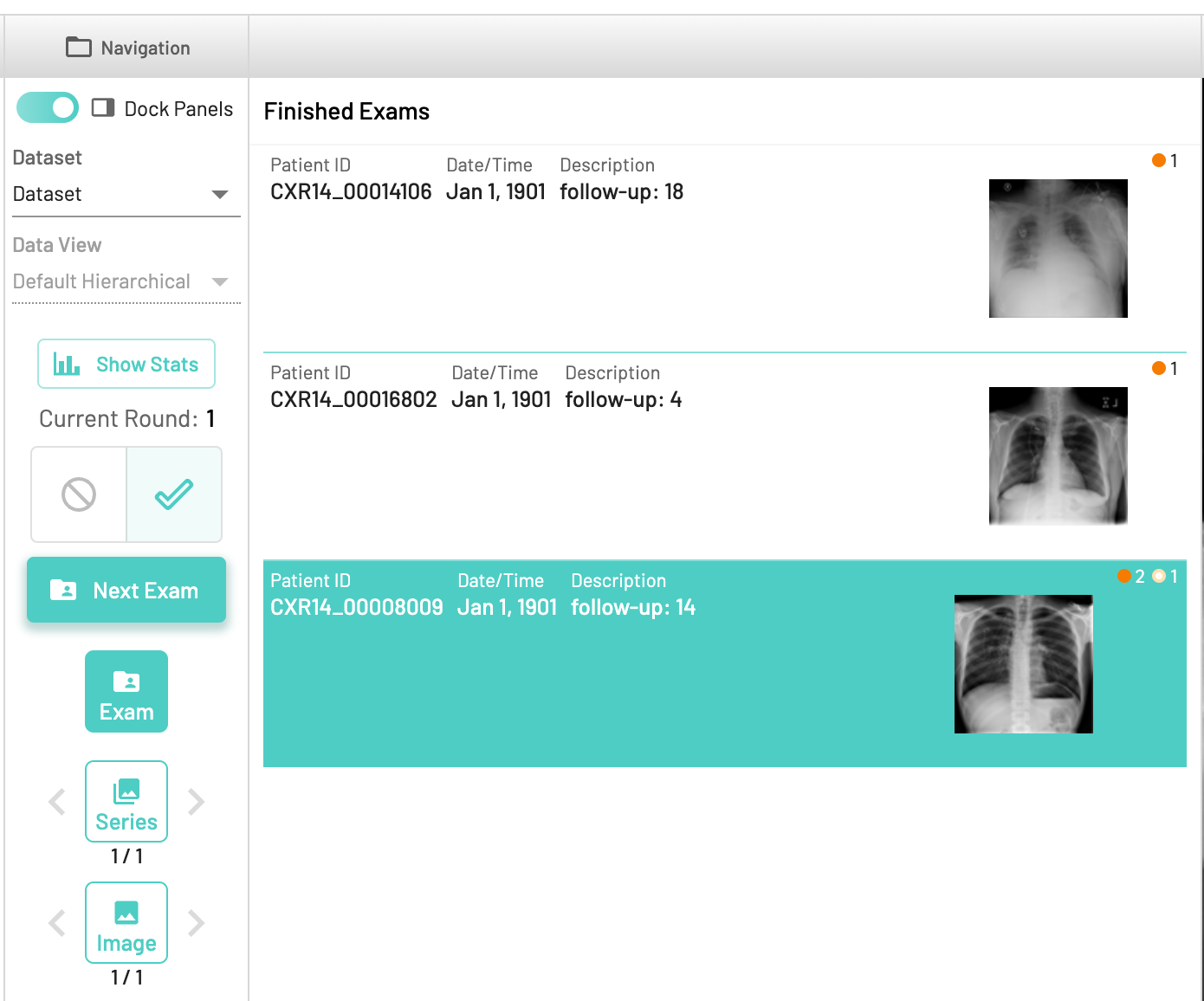

Crowdsourcing Mode History Feature

Annotators can now return to previously annotated exams while annotating in crowdsourcing mode.

Bug Fixes

- Updated dictation mappings in reporting for a more intuitive user experience.

- Fixed bug where audio transcription would fail to process input that was too short.

- Updated chat system prompt behavior so that new chats will not contain previous system prompts.

- Fixed bug causing account avatars to be blocked.

- Fixed bug preventing report launch from iPads with specific popup block settings.

- Fixed chat layout sizing on iPad.

- Fixed bug causing unnecessary vertical scroll bar.

- Fixed bug in cache during live transcription.

- Fixed bug that was preventing DICOM push.

- Fixed bug causing unreliable inference of email addresses.

- Fixed code block formatting during export to PDF.

- Fixed bug in formatting of reports that contained Markdown links.

- Fixed bug causing duplicate model tasks when running model inference.

- Resolved bug where resource label indicators did not respect the project user blinded flag.